A Kairosfocus Briefing

Note:

GEM 06:03:17; this adj. 06:12:16 - 17 to 08:09:28 further adj.to 09:01:04 - 12:06:01, &12: 09:29 Ver 1.7.2c

https://www.angelfire.com/pro/kairosfocus/resources/Info_design_and_science.htm

On

Information, Design, Science, Creation & Evolutionary

Materialism:

Engaging the

Current controversies over the role of information and design in

understanding the origins of the Cosmos, Life, Biodiversity, Mind, Man

and Morality

Is

it possible for any man to behold these things, and yet imagine that

certain solid and individual bodies move by their natural force and

gravitation, and that a world so beautifully adorned was made by their

fortuitous concourse? He who believes this may as well

believe that if a great quantity of the one-and-twenty letters,

composed either of gold or any other matter, were thrown upon the

ground, they would fall into such order as legibly to form the Annals

of Ennius. I doubt whether fortune could

make a single verse of them. How, therefore, can these

people assert that the world was made by the fortuitous concourse of

atoms, which have no color, no quality—which the Greeks call

[poiotes], no sense? [Cicero, THE

NATURE OF THE GODS BK II Ch XXXVII, C1 BC, as trans Yonge

(Harper & Bros., 1877), pp. 289 - 90.]

SYNOPSIS: The raging controversy

over inference to design too often puts out more heat and smoke than

light. However, as Cicero pointed out in the cite just above, the

underlying issues are of such great importance, that all educated

people need to think about them carefully. Accordingly, in the below we

shall examine a cluster of key cases, starting with the often

overlooked but crucial point that in

communication systems, we first start with an apparent

message, then ask how much information is in it. This directly

leads to the question first posed by

Cicero, as to what is the probability that the received

apparent message was actually sent, or is it "noise that got lucky"?

The solution to this challenge is in fact an implicit inference to

design, and it is resolved by essentially addressing the functionality

and complexity of what was received, relative to what it is credible --

as opposed to logically possible -- that noise could do. That is, the

design inference has long since been deeply embedded in scientific

thought, once we have had to address the issue: what is information?

Then, we look at several key cases: informational

macromolecules such as DNA, the related origins of observed biodiversity,

and cosmological finetuning.

Thereafter, the issue is broadened to look at the

God of the gaps challenge. Finally, a technical

note on thermodynamics and several other technical or detail appendices are presented: (p) a critical look at the Dover/ Kitzmiller case (including a note on Plato on chance, necessity and agency in The Laws, Bk X), (q) the roots of the complex specified information concept, (r) more details on chance, necessity and agency, (s) Newton in Principia as a key scientific founding father and design thinker, (t) Fisherian vs Bayesian statistical inference in light of the Caputo case, and (u) the issue of the origin and nature of mind. Cf. Notice

below. (NB: For FAQs

and primers go here. This Y-zine also seems to be worth a browse.)

CONTENTS:

INTRODUCTION

--> ID in a nutshell

-->

ID FAQs and Primers

--> A basic survey of the ID

issue and its significance

--> On research-level ID

topics

A] The

Core Question: Information, Messages and Intelligence

-->

Defining "Intelligent

Design"

--> Three

causal factors: chance,

necessity, agency

--> The design inference explanatory filter

--> Defining

"Intelligence"

--> On "Lucky Noise"

--> Defining "Information"

--> Shannon Info (AKA Informational Entropy) and the link to thermodynamic entropy

--> Defining "Functionally

Specific, Complex Information" [FSCI]

--> Metrics for FSCI (and CSI)

--> Orgel vs Shapiro on getting to origin of life

B] Case

I: DNA as an Informational Macromolecule

--> Dembski's

Universal Probability Bound

--> "Definitionitis" vs. the

case by case recognition of FSCI

--> Of monkeys and keyboards,

updated

--> Implications of the scale of the configuration space of the genome

--> Optimality and structured codon assignments in the DNA code

--> Objections and responses

C] Case

II: Macroevolution and the Diversity of Life

--> the observed fossil record pattern: sudden appearance, stasis, disappearance

--> Defining

"Irreducible Complexity"

--> The

Bacterial Flagellum

--> Macro-

vs. Micro- Evolution

--> Natural selection as a probabilistic culler

vs. an innovator (& the gambler's ruin challenge)

D] Case

III: The evidently Fine-tuned Cosmos

--> Leslie

on convergent fine-tuning

--> On "multiverses"

--> Objections and responses

E] Broadening

the Issue: Persistent "Gaps," Man, Nature, Science and Worldviews

--> On

"Defining" Science

--> The US NSTA's attempted naturalistic redefinition of the nature of science, July 2000

--> Lewontin's materialism agenda in the name of "Science"

--> The NAS-NSTA intervention on science education in Kansas

--> On marking the key distinction between origins and operations sciences

CONCLUSION

APPENDIX I: On

Thermodynamics, Information and Design

--> On the consequences of injecting raw energy into an open system

--> On energy conversion devices and their origin, in light of having FSCI

-->

Brillouin's Negentropy view of

the link between Information and Entropy

-->

A Thought Experiment using "nanobots"

building "a flyable micro-jet" to illustrate the issues

involved in Origin of Life (OOL) and neo-Darwinian-style Macroevolution

in light of thermodynamics

APPENDIX 2: On

Censorship and Manipulative Indoctrination in science education and in

the court-room: the Kitzmiller/Dover Case

-->

Plato on chance, necessity and

agency in The Laws,

Book X

APPENDIX

3: On the source, coherence and

significance of the concept, Complex, Specified Information (CSI)

--> Thaxton,

Bradley and Olsen on the source of CSI

--> Orgel -- the first known

documented use of the term; in

1973

--> Wicken's "wiring diagram" functional organisation and the roots of the term Functionally Specific, Complex Information [FSCI]

--> Trevors and Abel on sequence complexity: OSC,

RSC, FSC

--> Yockey and

Wickens and the source of the term used in this note,

Functionally Specified, Complex Information [FSCI]

--> Of the

creation of tropical cyclones and the origin of snowflakes

--> Dembski's

work and the identification of

the edge of chance

APPENDIX 4: Of chance, necessity, agency, the natural vs. the supernatural vs. the artificial, Kant and Fisher vs Bayes:

APPENDIX 5: Newton's thoughts on the designer of

"[t]his most

beautiful system of the sun, planets, and comets . . . "

in his General Scholium to the Principia

APPENDIX 6: Fisher,

Bayes, Caputo and Dembski

APPENDIX 7: Of the Weasel "cumulative selection" program and begging the question of FSCI, c. 1986

APPENDIX 8: Of the inference to design and the origin

and nature of mind [and thence, of morals]

--> The (modified) Welcome to Wales thought experiment --> Key cites: Liebnitz's mill, Taylor on Wales, Reppert on Lewis' AFR, Shapiro's blind forces golf game, Plantinga on natural selection vs the accuracy of beliefs, Crick's astonishing hypothesis & Philip Johnson's rejoinder, Hofstadter on Godel, Atmanspacher on Quantum theory, randomness and free will, Calef's corrective on mind's influence, the Penrose- Hameroff graviton suggestion, the Derek Smith two-tier controller cybernetic loop model--> Evolutionary materialism and self-referential incoherence

--> Evolutionary materialism and the is-ought gap

--> Implications of the reality of evil etc

--> On the hard problem of consciousness

INTRODUCTION:

The raging controversy over inference to design, sadly, too often puts

out more heat and blinding, noxious smoke than light.

(Worse, some of the attacks to

the man and to strawman

misrepresentations of the actual

technical case for design [and even of the

basic definition of design theory] that have now become a routine distracting rhetorical

resort and public

relations spin tactic of too many of the defenders of the

evolutionary materialist paradigm, show that this resort to

poisoning the atmosphere of the discussion is in some quarters

quite deliberately intended

to rhetorically blunt the otherwise plainly telling force of

the mounting pile of evidence and issues that make the inference to

design a very live contender indeed.)

Be that as it may, thanks to the transforming

impacts of the ongoing Information Technology revolution, information has now increasingly

joined matter, energy, space and time as another recognised fundamental

constituent of the cosmos as we experience it. For, it has become

increasingly clear over the past sixty years or so, that information is deeply embedded

in key dimensions of existence. This holds from the evidently fine-tuned complex organisation

of the physics of the cosmos as we observe it, to the intricate nanotechnology of the

molecular machinery of life [cf. also J Shapiro here!

(NB on AI here,

and on Strong vs Weak AI here

and here

. . . ! )], through the

informational requisites of body-plan level biodiversity, on

to the origin of mannishness

as we experience it, including mind and reasoning, as well as

conscience and morals. So, we plainly must frankly and fairly address

the question of design as a proposed best current

explanation -- and as a paradigm framework for transforming

the praxis of science and thought in general, not just technology --

as, it has profound implications for how we see ourselves in our world,

indeed (as the intensity of the rhetorical reaction noted just now

indicates) it powerfully challenges the dominant evolutionary

materialism that still prevails among the West's secularised

educated elites.

In a nutshell:

The

scientific study of origins helps us probe the roots of our existence.

Unfortunately, some have recently undercut this search by trying to re-define science as a search for “natural causes,” which imposes materialistic conclusions before the facts can speak. However, through objectively studying signs of intelligence -- the intelligent design approach -- we can allow the evidence to speak for itself. For, reliably, functionally specified complex information comes from intelligence. Thus, ID helps us restore balance

to science and to many other aspects of our culture that are shaped by

our views on our origins. [HT: StephenB, a long-standing commenter

at UD. (NB: For basic FAQs and primers on ID-related

topics and issues, kindly go to the IDEA Center, here.

For a layman's level

introduction to the basic design issue go here,

and for a similar layman's level discussion of why it is an important

challenge to the assumptions, assertions and agendas of our secularised

intellectual culture, go here.

For a discussion of

ID-related research and associated topics, go to the Research-ID

Wiki here.)]

However, it is obviously also necessary for us to now pause

and survey in more details on the key facts, concepts and issues, drawing out implications

as we seek to infer the best explanation for the information-rich world

in which we live. That is the task of this briefing.

A]

The Core Question: Information, Messages and Intelligence

Since the end of the 1930's, five key trends have

emerged, converged and become critical in the worlds of science and

technology:

- Information Technology and computers, starting with

the Atanasoff-Berry

Computer [ABC], and other pioneering computers in

the early 1940's;

- Communication technology and its underpinnings in

information

theory, starting with Shannon's

breakthrough analysis in 1948;

- The partial elucidation of the DNA code as

the information basis of life at molecular level, since the 1950s, as,

say Thaxton reports by citing Sir Francis Crick's March

19, 1953 remarks to

his son: "Now we believe that the DNA is a code. That is, the order of

bases (the letters) makes one gene different from another gene (just as

one page of print is different from another)";

- The "triumph" of the

Miller-Urey spark-in-gas experiment, also in 1953,

which produced several amino acids, the basic building blocks of

proteins; but, also, we have seen a persistent failure thereafter to

credibly and robustly account for the origin of life through so-called chemical

evolution across subsequent decades ; and,

- The discovery of the intricate

finetuning

of the parameters in the observed cosmos for life

as we know and experience it -- strange as it may seem: again, starting

in 1953.

The common issue in all of these lies in the

implications of the concepts, communication and information -- i.e. the

substance that is communicated. Thus, we should

now focus on the basic communication system model, as that sets the

context for further discussion:

|

Fig. A.1: A Model of the "Typical" Communication System

|

In this model, information-bearing messages

flow from a source to a sink, by being: (1) encoded, (2) transmitted

through a channel as a signal, (3) received, and (4) decoded. At each

corresponding stage: source/sink encoding/decoding,

transmitting/receiving, there is in effect a mutually agreed standard,

a so-called protocol. [For instance, HTTP

-- hypertext

transfer protocol --

is a major protocol for the Internet. This is why many web page

addresses begin: "http://www . . ."]

However, as the diagram hints at, at each stage noise

affects the process, so that under certain conditions, detecting and

distinguishing the signal from the noise

becomes a challenge. Indeed, since noise is due to a random fluctuating

value of various physical quantities [due in turn to the random

behaviour of particles at molecular levels], the

detection of a message and accepting it as a legitimate message rather

than noise that got lucky, is a question of inference to design.

In short, inescapably, the design inference issue is foundational to

communication science and information theory.

Let us note, too, that similar empirically

testable inferences to intelligent agency are a commonplace

in forensic science, archaeology, pharmacology and a great many fields

of pure and applied science. Climatology is an interesting case: the

debate over anthropogenic climate change is about unintended

consequences of the actions of intelligent agents.

Thus, Dembski's

definition of design theory as a scientific project through

pointed question and answer is apt:

intelligent design begins with a

seemingly innocuous question: Can objects, even if nothing is known

about how they arose, exhibit features that reliably signal the action

of an intelligent cause? . . . Proponents of intelligent design, known

as design theorists, purport to study such signs formally, rigorously,

and scientifically. Intelligent design may therefore

be defined as the science that studies signs of intelligence.

[BTW, it is sad but necessary to highlight what should be obvious:

namely, that it is only common

academic courtesy (cf. here,

here,

here,

here,

here

and here!)

to use the historically

justified definition of a discipline that is generally

accepted by its principal proponents.]

So, having now highlighted what is at stake, we

next clarify two key underlying questions. Namely, what is

"information"? Then, why is it seen as a

characteristic sign of intelligence at work?

First, let

us identify what intelligence

is. This is fairly easy: for, we are familiar with it from the

characteristic behaviour exhibited by certain known intelligent agents

-- ourselves.

Specifically, as we know from experience and reflection,

such agents take actions and devise and implement strategies that

creatively address and solve problems they encounter; a functional

pattern that does not depend at all on the identity of the particular

agents. In short, intelligence is as intelligence does. So, if we see evident active, intentional,

creative, innovative and adaptive [as opposed to merely fixed

instinctual] problem-solving behaviour similar to that of

known intelligent agents, we are justified in attaching the label:

intelligence.

[Note how this definition by functional description

is not artificially confined to HUMAN intelligent agents: it would

apply to computers,

robots, the alleged alien residents of Area 51, Vulcans, Klingons or

Kzinti, or demons or gods, or God.] But also, in so solving their

problems, intelligent agents may leave behind empirically evident signs

of their activity; and -- as say archaeologists and detectives know -- functionally

specific, complex information [FSCI]

that would otherwise be utterly improbable, is one of these signs.

Such preliminary points should also immediately lay to rest the assertion in some quarters that inference

to design is somehow necessarily "unscientific" -- as, such is

said to always and inevitably be about improperly

injecting "the supernatural" into scientific discourse.

(We hardly need to detain ourselves here with the associated claim

that intelligence is a "natural" phenomenon, one that spontaneously

emerges from the biophysical world; for that is plainly one of the

issues to be settled by investigation and analysis in light of

empirical data, conceptual issues and comparative difficulties, not

dismissed by making question-begging evolutionary materialist

assertions. Cf App 6 below. [Also, HT

StephenB, a longstanding commenter at the Uncommon Descent [UD] blog,

for deeply underscoring the significance of the natural/supernatural

issue and for providing incisive comments, which have materially helped

shape the below.])

Now, Dembski's definition just above draws on the common-sense point that: [a] we may quite properly make a significantly different contrast from "natural vs. supernatural": i.e. "natural" vs. "artificial." [Where "natural" = "spontaneous" and/or "tracing to chance + necessity as the decisive causal factors" -- what we may term material causes; and, "artificial" = "intelligent."] He and other major design thinkers therefore propose that: [b] we may then set out to identify key empirical/ scientific

factors (= "signs of intelligence") to reliably mark the distinction.

One of these, is that when we see regularities of nature, we are seeing low contingency, reliably observable, spontaneous patterns and therefore scientifically explain such by law-like mechanical necessity: e.g. an unsupported heavy object, reliably, falls by "force of gravity." But, where we see instead high contingency -- e.g., which side of a die will be uppermost when it falls -- this is chance ["accident"] or intent ["design"].

Then, if we further notice that the observed highly contingent pattern

is otherwise very highly improbable [i.e. "complex"] and is independently functionally specified,

it is most credible that it is so by design, not accident. (Think

of a tray of several hundreds of dice, all with "six" uppermost: what

is its best explanation -- mechanical necessity, chance, or intent? [Cf

further details below.]) Consequently, we can easily see that [c] the attempt to infer or assert that intelligent

design thought invariably constitutes "a 'smuggling-in' of

'the supernatural' " (as opposed to explanation by reference to the "artificial" or "intelligent") as the contrast to

"natural," is a gross error; one that not only begs the question but also misunderstands, neglects or

ignores (or even sometimes, sadly, calculatedly distorts) the explicit

definition of ID and its methods of investigation as has

been repeatedly published and patiently explained by its leading

proponents. (Cf. here

for a detailed case study on how just this -- too often, sadly, less than

innocent -- mischaracterisation

of Design Theory is used by secularist advocates such as the ACLU.)

Further, given the significance of what routinely

happens when we see an apparent message, we know or should know that [d] we routinely and confidently infer from signs of intelligence to the existence and action of intelligence. On this, we should therefore again observe that Sir Francis Crick noted to his son, Michael, in 1953, in the already quoted letter: "Now we believe that the DNA is a code. That is, the order of

bases (the letters) makes one gene different from another gene (just as

one page of print is different from another)."

For, complex, functional messages, per reliable observation, credibly trace to intelligent senders.

This holds, even where in certain particular cases one may then wish to raise the subsequent question: what

is the identity (or even, nature) of the particular intelligence inferred to be the

author of certain specific messages? In turn, this may lead to

broader, philosophical --

that is, worldview level -- questions. Observe carefully, though: [e] such

questions go beyond the "belt" of science theories, proper, into the

worldview-tinged issues that -- as Imre Lakatos reminded

us -- are embedded in the inner core of scientific research programmes,

and are in the main addressed through philosophical

rather than specifically scientific methods.

[It helps to remember that for a long time, what we call "science" today was

termed "natural

philosophy."] Also, I think it is wiser to

acknowledge that we have no satisfactory explanation of a matter,

rather than insist that one will only surrender one's position (which

has manifestly failed after reasonable trials)

if a "better" one emerges -- all the while judging "better" by

selectively hyperskeptical criteria.

In short, those who would make such a rhetorical

dismissal, would do well to ponder anew the

cite at the head of this web page. For, the key insight of Cicero

[C1 BC!] is that, in particular, a

sense-making (thus, functional), sufficiently complex string of digital characters is

a signature of a true

message produced by an

intelligent actor, not a likely product of a

random process. He then [logically speaking] goes

on to ask concerning the evident FSCI in nature, and challenges those

who would explain it by reference to chance collocations of

atoms.

That is a good challenge, and it is one that should

not be ducked by worldview-level begging of serious definitional

questions or -- worse -- shabby rhetorical misrepresentations and

manipulations.

Therefore,

let us now consider in a little more detail a situation where an

apparent message is received. What does that mean? What does it imply

about the origin of the message . . . or, is it just noise that "got lucky"?

- If an apparent message is received, it means that

something is working as an intelligible -- i.e. functional

-- signal for the receiver. In effect, there is a

standard way to make and send and recognise and use messages in some

observable entity [e.g. a radio, a computer network, etc.], and there

is now also some observed event, some variation in a physical

parameter, that corresponds to it. [For instance, on this web page as

displayed on your monitor, we have a pattern of dots of light and dark

and colours on a computer screen, which correspond, more or less, to

those of text in English.]

- Information theory, as Fig A.1 illustrates, then

observes that if we have a receiver, we credibly

have first had a transmitter, and a channel

through which the apparent message has come; a meaningful message that

corresponds to certain codes or standard patterns

of communication and/or intelligent action. [Here, for instance,

through HTTP and TCP/IP, the original text for this web page has been

passed from the server on which it is stored, across the Internet, to

your machine, as a pattern of binary digits in packets. Your computer

then received the bits through its modem, decoded the digits, and

proceeded to display the resulting text on your screen as a complex, functional coded pattern of dots of light and colour. At each stage,

integrated, goal-directed intelligent action is deeply involved,

deriving from intelligent agents -- engineers and computer programmers.

We here consider of course digital signals, but in principle anything

can be reduced to such signals, so this does not affect the generality

of our thoughts.]

- Now, it is of course entirely possible, that the

apparent message is "nothing but" a lucky burst of noise

that somehow got through the Internet and reached your machine. That

is, it is logically and physically possible [i.e.

neither logic nor physics forbids it!] that every

apparent message you have ever got across the Internet -- including not

just web pages but also even emails you have received -- is nothing but

chance and luck: there is no intelligent source that actually sent such a message

as you have received; all is just lucky

noise:

"LUCKY NOISE"

SCENARIO: Imagine a world in which somehow all the "real"

messages sent "actually" vanish into cyberspace and "lucky noise"

rooted in the random behaviour of molecules etc, somehow substitutes

just the messages that were intended -- of course, including whenever

engineers or technicians use test equipment to

debug telecommunication and computer systems! Can you find a

law of logic or physics that: [a] strictly

forbids such a state of affairs from possibly existing;

and, [b] allows you to strictly

distinguish that from the "observed world" in which we think we live?

That is, we are back to a Russell "five-

minute- old- universe"-type

paradox. Namely, we cannot empirically distinguish the world we think

we live in from one that was instantly created five minutes ago with

all the artifacts, food in our tummies, memories etc. that we

experience. We solve such paradoxes by worldview

level inference to best explanation, i.e. by insisting that unless

there is overwhelming, direct evidence that leads us to that

conclusion, we do not live in Plato's

Cave of

deceptive shadows that we only imagine is reality, or that we are

"really" just brains in vats stimulated by some mad scientist, or we

live in a The Matrix

world, or the like. (In turn, we can therefore see just how deeply embedded key

faith-commitments are in our very rationality, thus all worldviews and

reason-based enterprises, including science. Or, rephrasing for

clarity: "faith" and

"reason" are not opposites; rather, they are inextricably intertwined

in the faith-points

that lie at the core of all worldviews. Thus, resorting

to selective

hyperskepticism

and objectionism to dismiss another's faith-point [as noted above!], is

at best self-referentially inconsistent; sometimes, even hypocritical

and/or -- worse yet -- willfully deceitful. Instead, we should

carefully work through the

comparative difficulties

across live options at worldview level, especially

in discussing matters of fact. And it is in that context of

humble self consistency and critically aware, charitable

open-mindedness that we can now reasonably proceed with this

discussion.)

- In short, none of us

actually lives or can consistently live as though s/he seriously

believes that: absent absolute proof to the contrary, we must

believe that all is noise. [To see the force of this,

consider an example posed by Richard Taylor. You are sitting in a

railway carriage and seeing stones you believe to have been

randomly arranged, spelling out: "WELCOME TO WALES." Would

you believe the apparent message? Why or why not?]

Q:

Why then do we believe in intelligent sources behind the web pages and

email messages that we receive, etc., since we cannot ultimately

absolutely prove that such is the case?

ANS:

Because we believe the

odds of such "lucky noise" happening by chance are so

small, that we intuitively simply ignore it. That is, we all

recognise that if an apparent message is contingent [it

did not have to be as

it is, or even to

be at all], is functional within

the context of communication, and is sufficiently complex that it is

highly unlikely to have happened by chance, then it is much better to

accept the explanation that it is what it appears to be -- a message

originating in an intelligent [though perhaps not wise!] source -- than

to revert to "chance" as the default assumption. Technically,

we compare how close the received signal is to legitimate messages, and

then decide that it is likely to be the "closest" such message. (All of

this can be quantified, but this intuitive level discussion is enough

for our purposes.)

In short, we all intuitively and even routinely

accept that: Functionally

Specified, Complex Information, FSCI, is a signature

of messages originating in intelligent sources.

Thus, if we then try to dismiss the study of such

inferences to design as "unscientific," when they may cut across our

worldview preferences, we are plainly being grossly inconsistent.

Further to this, the common attempt to pre-empt the

issue through the

attempted secularist redefinition of science as

in effect "what can be explained on the premise of evolutionary

materialism - i.e. primordial matter-energy joined

to cosmological- + chemical- + biological macro- + sociocultural-

evolution, AKA 'methodological

naturalism' " [ISCID def'n: here]

is itself yet

another begging of the linked worldview level questions.

For in fact, the issue in the communication

situation once an apparent message is in hand is:

inference to (a) intelligent

-- as opposed to supernatural

-- agency [signal] vs. (b) chance-process [noise]. Moreover,

at least since Cicero,

we have recognised that the presence of functionally specified complexity

in such an apparent message helps us make that decision. (Cf.

also Meyer's closely related discussion of the demarcation

problem here.)

More

broadly the decision faced once we see an apparent message, is first to

decide its source across a trichotomy: (1) chance; (2) natural regularity rooted in

mechanical necessity

(or as Monod

put it in his famous 1970 book, echoing

Plato, simply: "necessity");

(3) intelligent agency.

These are the three commonly observed causal forces/factors in our

world of experience and observation. [Cf. abstract of a

recent technical, peer-reviewed, scientific discussion here.

Also, cf. Plato's remark in his The

Laws, Bk X, excerpted below.]

Each

of these forces stands at the same basic level as an

explanation or cause,

and so the proper question is to rule in/out relevant factors at work,

not to decide before the fact that one or the other is not admissible

as a "real" explanation.

This often confusing issue is best initially approached/understood through a concrete example . . .

A CASE STUDY ON CAUSAL

FORCES/FACTORS -- A Tumbling Die: Heavy objects tend to fall under

the law-like natural

regularity we

call gravity. If the object is a die, the face that ends up on the top

from the set {1, 2, 3, 4, 5, 6} is for practical purposes a matter of chance.

But, if the die is cast

as part of a game, the results are as much a product of agency as of natural

regularity and chance. Indeed, the agents in question are

taking advantage of natural regularities and chance to achieve their purposes!

This

concrete, familiar illustration should suffice to show that the three causal factors

approach is not at all arbitrary or dubious -- as some are

tempted to imagine or assert. [More details . . .]

Then also, in certain highly important

communication situations, the next issue after detecting agency as best causal explanation, is whether the detected signal

comes from (4) a trusted source, or (5) a malicious interloper, or is a

matter of (6) unintentional cross-talk. (Consequently, intelligence

agencies have a significant and very practical interest in the

underlying scientific questions of inference to agency then

identification of the agent -- a potential (and arguably, probably

actual) major application of the theory of the inference to design.)

Next, to

identify which of the three is most important/ the best explanation in

a given case, it is useful to extend the principles of statistical

hypothesis testing through Fisherian elimination to create the Explanatory Filter:

|

Fig A.2: The explanatory filter allows for an evidence-based investigation of causal factors. By setting a quite strict threshold between chance and intelligence, i.e. the UPB, a reliable inference to design may be made when we see especially functionally specific, complex information

[FSCI] -rich patterns, but at the cost of potentially ruling "chance"

incorrectly.

UNDERLYING LOGIC: Once the aspect of a process, object or phenomenon under

investigation is significantly contingent, natural regularities rooted in mechanical necessity can plainly be ruled out as the dominant factor for that facet. So, the key issue is whether the observed high contingency is unambiguously evidently purposefully directed; relative to the type and body of experiences or observations that would warrant a reliable inductive inference. For this, the UPB sets a reasonable, conservative and reliable threshold:

Unless (i) the search resources of the observed cosmos would generally be fruitlessly exhausted

in an attempt to arrive at the observed result (or materially similar

results) by random searches, AND (ii) the outcome is [especially

functionally] specified, observed high contingency is by default assigned to "chance."

Thus, FSCI and the associated wider concept, complex, specified information [CSI] are identified as reliable (but not exclusive) signs of intelligence. [In fact, even though -- strictly -- "lucky noise" could account for the existence of apparent messages such as this web page, we routinely identify that if an apparent message has functionality, complexity and specification, it is better explained by intent than by accident and confidently infer to intelligent rather than mechanical cause. This is proof enough -- on pain of self-referentially incoherent selective hyperskepticism -- of just how reasonable the explanatory filter is.]

________________ |

The second

major step is to refine our thoughts, through discussing the

communication theory definition of and its approach to measuring

information. A good place to begin this is with British Communication

theory expert F. R Connor, who gives us an excellent

"definition by discussion" of what information

is:

From a human point of view the word 'communication'

conveys the idea of one person talking or writing to another in words

or messages . . . through the use of words derived from an alphabet

[NB: he here means, a "vocabulary" of possible signals]. Not all words

are used all the time and this implies that there is a minimum number

which could enable communication to be possible. In order to

communicate, it is necessary to transfer information to another person,

or more objectively, between men or machines.

This naturally leads to the definition of the word

'information', and from a communication point of view it does not have

its usual everyday meaning. Information is not what is

actually in a message but what could constitute a

message. The word could implies a

statistical definition in that it involves some selection of the

various possible messages. The important quantity

is not the actual information content of the message but rather its

possible information content.

This is the quantitative definition of information

and so it is measured in terms of the number of selections that could

be made. Hartley was the first to suggest a logarithmic unit . . . and

this is given in terms of a message probability. [p. 79, Signals,

Edward Arnold. 1972. Bold emphasis added. Apart from the justly

classical status of Connor's series, his classic work dating from

before the ID controversy arose is deliberately cited, to give us an

indisputably objective benchmark.]

To quantify the above definition of what is perhaps

best descriptively termed information-carrying

capacity, but has long been simply termed information

(in the "Shannon sense" - never mind his disclaimers . . .), let us

consider a source that emits symbols from a vocabulary: s1,s2,

s3, . . . sn, with

probabilities p1, p2, p3,

. . . pn. That is, in a "typical" long string of

symbols, of size M [say this web page], the average number that are

some sj, J, will be such that the ratio J/M

--> pj, and in the limit attains

equality. We term pj the a priori

-- before the fact -- probability of symbol sj.

Then, when a receiver

detects sj, the question arises as to

whether this was sent. [That is, the mixing in of noise

means that received messages are prone to misidentification.] If on

average, sj will be detected correctly a

fraction, dj of the time, the a

posteriori -- after the fact -- probability of sj

is by a similar calculation, dj. So, we now

define the information content of symbol sj as,

in effect how much it surprises us on average when

it shows up in our receiver:

I = log [dj/pj],

in bits [if the log is base 2, log2]

. . . Eqn 1

This

immediately means that the question of receiving information

arises AFTER an apparent symbol sj has been

detected and decoded. That is, the

issue of information inherently implies an inference to

having received an intentional signal in the face of the possibility

that noise could be present. Second, logs are

used in the definition of I, as they give an additive property: for,

the amount of information in independent signals, si

+ sj, using the above definition, is such that:

I total = Ii + Ij

. . . Eqn 2

For

example, assume that dj for the moment is 1,

i.e. we have a noiseless channel so what is transmitted is just what is

received. Then, the information in sj is:

I = log [1/pj] = - log pj

. . . Eqn 3

This

case illustrates the additive property as well, assuming that symbols si

and sj are independent. That means that the

probability of receiving both messages is the product of the

probability of the individual messages (pi *pj);

so:

Itot = log1/(pi

*pj) = [-log pi] + [-log pj]

= Ii + Ij . . . Eqn 4

So

if there are two symbols, say 1 and 0, and each has probability 0.5,

then for each, I is - log [1/2], on a base of 2, which is 1 bit. (If

the symbols were not equiprobable, the less probable binary digit-state

would convey more than, and the more probable, less than, one

bit of information. Moving over to English text, we can

easily see that E is as a rule far more probable than X, and that Q is

most often followed by U. So, X conveys more information than E, and U

conveys very little, though it is useful as redundancy, which gives us

a chance to catch errors and fix them: if we see "wueen"

it is most likely to have been "queen.")

Further

to this, we may average the information per symbol in the communication

system thusly (giving in termns of -H to make the additive relationships clearer):

- H

= p1 log p1 + p2

log p2 + . . . + pn log pn

or, H = - SUM [pi log pi]

. . . Eqn 5

H,

the average

information per symbol transmitted [usually, measured as: bits/symbol], is

often termed the Entropy; first, historically, because it resembles one

of the expressions for entropy in statistical thermodynamics. As Connor

notes: "it is often referred to as the

entropy of the source." [p.81, emphasis added.] Also,

while this is a somewhat controversial view in Physics, as is briefly

discussed in Appendix 1below, there

is in fact an informational interpretation of

thermodynamics that shows that informational and thermodynamic entropy

can be linked conceptually as well as in mere mathematical

form. Though somewhat controversial even in quite recent years, this is

becoming more broadly accepted in physics and information theory, as

Wikipedia now discusses [as at April 2011] in its article on Informational Entropy (aka Shannon Information, cf also here):

At an everyday practical level the links between information entropy

and thermodynamic entropy are not close. Physicists and chemists are apt

to be more interested in changes in entropy as a system spontaneously evolves away from its initial conditions, in accordance with the second law of thermodynamics, rather than an unchanging probability distribution. And, as the numerical smallness of Boltzmann's constant kB indicates, the changes in S / kB

for even minute amounts of substances in chemical and physical

processes represent amounts of entropy which are so large as to be right

off the scale compared to anything seen in data compression or signal

processing.

But, at a multidisciplinary level, connections can be made

between thermodynamic and informational entropy, although it took many

years in the development of the theories of statistical mechanics and

information theory to make the relationship fully apparent. In fact, in

the view of Jaynes (1957), thermodynamics should be seen as an application

of Shannon's information theory: the thermodynamic entropy is

interpreted as being an estimate of the amount of further Shannon

information needed to define the detailed microscopic state of the

system, that remains uncommunicated by a description solely in terms of

the macroscopic variables of classical thermodynamics. For example,

adding heat to a system increases its thermodynamic entropy because it

increases the number of possible microscopic states that it could be in,

thus making any complete state description longer. (See article: maximum entropy thermodynamics.[Also,another article remarks: >>in the words of G. N. Lewis

writing about chemical entropy in 1930, "Gain in entropy always means

loss of information, and nothing more" . . . in the

discrete case using base two logarithms, the reduced Gibbs entropy is

equal to the minimum number of yes/no questions that need to be answered

in order to fully specify the microstate, given that we know the

macrostate.>>]) Maxwell's demon

can (hypothetically) reduce the thermodynamic entropy of a system by

using information about the states of individual molecules; but, as Landauer

(from 1961) and co-workers have shown, to function the demon himself

must increase thermodynamic entropy in the process, by at least the

amount of Shannon information he proposes to first acquire and store;

and so the total entropy does not decrease (which resolves the paradox).

Summarising Harry Robertson's Statistical Thermophysics

(Prentice-Hall International, 1993) -- excerpting desperately and

adding emphases and explanatory comments, we can see, perhaps, that

this should not be so surprising after all. (In effect, since we do not

possess detailed knowledge of the states of the vary large number of

microscopic particles of thermal systems [typically ~ 10^20 to 10^26; a

mole of

substance containing ~ 6.023*10^23 particles; i.e. the Avogadro

Number], we can only view them in terms of those gross averages we term

thermodynamic variables [pressure, temperature, etc], and so we cannot

take advantage of knowledge of such individual particle states that

would give us a richer harvest of work, etc.)

For,

as he astutely observes on pp. vii - viii:

.

. . the standard assertion that molecular chaos exists is nothing more

than a poorly disguised admission of ignorance, or lack of detailed

information about the dynamic state of a system . . . . If I am able to perceive order, I

may be able to use it to extract work from the system, but if

I am unaware of internal correlations, I cannot use them for

macroscopic dynamical purposes. On this basis, I shall

distinguish heat from work, and thermal energy from other forms

. . .

And,

in more details, (pp. 3 - 6, 7, 36, cf

Appendix 1 below for a more detailed development of

thermodynamics issues and their tie-in with the inference to design;

also see recent ArXiv papers by Duncan and Samura here

and here):

.

. . It has long been

recognized that the assignment of probabilities to a set represents

information, and that some probability sets represent more information

than others . . . if one of the probabilities say p2

is unity and therefore the others are zero, then we know that the

outcome of the experiment . . . will give [event] y2.

Thus we have complete information . . . if we have no basis . . . for

believing that event yi is more or less likely

than any other [we] have the least possible information about the

outcome of the experiment . . . . A remarkably simple and clear

analysis by Shannon [1948]

has provided us with a quantitative measure of the uncertainty, or

missing pertinent information, inherent in a set of probabilities [NB:

i.e. a probability different from 1 or 0 should be seen as, in part, an index of ignorance]

. . . .

[deriving

informational entropy, cf. discussions here,

here,

here,

here

and here;

also Sarfati's discussion of debates and the issue of open systems here

. . . ]

H({pi}) = - C [SUM over i] pi*ln pi, [. . . "my" Eqn 6]

[where

[SUM over i] pi = 1, and we can define also

parameters alpha and beta such that: (1) pi =

e^-[alpha + beta*yi]; (2) exp [alpha] = [SUM

over i](exp - beta*yi) = Z [Z being in effect the

partition function across microstates, the "Holy Grail" of

statistical thermodynamics]. . . .

[H], called the

information entropy,

. . . correspond[s] to the thermodynamic

entropy [i.e. s, where also it was shown by

Boltzmann that s = k ln w], with C = k, the Boltzmann constant, and yi an energy level, usually ei, while [BETA] becomes 1/kT, with T

the thermodynamic temperature . . . A thermodynamic system

is characterized by a microscopic structure that is not observed in

detail . . . We attempt to develop a theoretical

description of the macroscopic properties in terms of its underlying

microscopic properties, which are not precisely known. We attempt to

assign probabilities to the various microscopic states . . . based on a

few . . . macroscopic observations that can be related to averages of

microscopic parameters. Evidently the problem that we attempt

to solve in statistical thermophysics is exactly the one just treated

in terms of information theory. It should not be surprising, then, that

the uncertainty of information theory becomes a thermodynamic variable

when used in proper context . . . .

Jayne's

[summary rebuttal to a typical objection] is ". . . The entropy of a

thermodynamic system is a measure of the degree of ignorance of a

person whose sole knowledge about its microstate consists of the values

of the macroscopic quantities . . . which define its thermodynamic

state. This is a perfectly 'objective' quantity . . . it is a function

of [those variables] and does not depend on anybody's personality.

There is no reason why it cannot be measured in the laboratory." . . .

. [pp. 3 - 6, 7, 36; replacing Robertson's use of S for Informational

Entropy with the more standard H.]

As

is discussed briefly in Appendix 1,

Thaxton, Bradley and Olsen [TBO], following

Brillouin et al, in the 1984

foundational work for the modern Design Theory, The Mystery of Life's Origins

[TMLO], exploit

this information-entropy link, through the idea of moving

from a random to a known microscopic configuration in the creation of

the bio-functional polymers of life, and then -- again following Brillouin

-- identify a quantitative information metric for the information of

polymer molecules. For, in

moving from a random to a functional molecule, we have in effect an

objective, observable increment in information about the molecule. This

leads to energy constraints, thence to a calculable concentration of

such molecules in suggested, generously "plausible" primordial "soups."

In effect, so unfavourable is the resulting thermodynamic balance, that

the concentrations of the individual functional molecules in such a

prebiotic soup are arguably so small as to be negligibly different from

zero on a planet-wide scale.

By many orders of magnitude, we

don't get to even one molecule each of the required polymers per

planet, much less bringing them together in the required proximity for

them to work together as the molecular machinery of life.

The linked chapter gives the details. More modern analyses [e.g.

Trevors and Abel, here

and here],

however, tend to speak directly in terms of

information and probabilities rather than the more arcane world of

classical and statistical thermodynamics, so let us now return to that

focus; in particular addressing information in its functional sense, as

the third step in this preliminary analysis.

As the third major step, we now turn

to information technology, communication systems and computers, which

provides a vital clarifying side-light from another view on how complex,

specified information functions

in information processing systems:

[In the context of computers] information

is data -- i.e. digital representations of raw

events, facts, numbers and letters, values of variables, etc. -- that

have been put together in ways suitable for storing in special data

structures [strings of characters, lists, tables, "trees" etc], and for

processing and output in ways that are useful [i.e. functional]. . . .

Information is distinguished from [a] data: raw events, signals, states

etc represented digitally, and [b] knowledge: information that has been

so verified that we can reasonably be warranted, in believing it to be

true. [GEM, UWI FD12A Sci Med and Tech in Society

Tutorial Note 7a, Nov 2005.]

That is, we

have now made a step beyond mere capacity to carry or convey

information, to the function

fulfilled by meaningful -- intelligible, difference making -- strings

of symbols. In effect, we here introduce into the concept,

"information," the meaningfulness, functionality

(and indeed, perhaps even

purposefulness) of messages

-- the fact that they make a

difference to the operation and/or structure of systems using such

messages, thus to outcomes;

thence, to relative or absolute success or failure of information-using

systems in given environments.

And, such outcome-affecting functionality is of

course the underlying reason/explanation for the use of information in

systems. [Cf. the recent peer-reviewed, scientific discussions here,

and here

by Abel and Trevors, in the context of the molecular

nanotechnology of life.] Let us note as well that since in general

analogue signals can be digitised [i.e. by some form of

analogue-digital conversion], the discussion thus far is quite general

in force.

So, taking these three main points together, we can

now see how information is conceptually and quantitatively

defined, how it can be measured in bits, and how it is used in

information processing systems; i.e., how it becomes functional.

In short, we can now understand that:

Functionally

Specific, Complex Information [FSCI]

is a

characteristic of complicated messages that function in systems to

help them practically solve problems faced by the systems in their

environments. Also, in cases where we directly and independently know

the source of such FSCI (and its accompanying functional organisation)

it is, as a general rule, created by purposeful, organising intelligent agents. So, on empirical observation based induction, FSCI is a reliable sign of such design,

e.g. the text of this web page, and billions of others all across the

Internet. (Those who object to this, therefore face the burden of

showing empirically that such FSCI does in fact -- on observation

-- arise from blind chance and/or mechanical necessity without

intelligent direction, selection, intervention or purpose.)

Indeed, this FSCI perspective lies at the foundation of

information theory:

(i) recognising signals as intentionally constructed messages transmitted in the face of the possibility

of noise,

(ii) where also, intelligently constructed signals have characteristics of purposeful specificity, controlled complexity and system- relevant functionality based on meaningful rules that distinguish them from meaningless noise;

(iii) further noticing that signals exist in functioning generation- transfer and/or storage- destination systems that

(iv)

embrace co-ordinated transmitters, channels, receivers, sources and sinks.

That this

is broadly recognised as true, can be seen from a surprising source,

Dawkins, who is reported to have said in his The Blind

Watchmaker (1987), p. 8:

Hitting upon

the lucky number that opens the bank's safe [NB: cf. here the case in

Brown's The Da Vinci

Code] is the equivalent, in our analogy, of hurling scrap

metal around at random and happening to assemble a Boeing 747. [NB:

originally, this imagery is due to Sir Fred Hoyle, who used it to argue

that life on earth bears characteristics that strongly suggest design.

His suggestion: panspermia

-- i.e. life drifted here, or else was planted here.] Of all the

millions of unique and, with hindsight equally improbable, positions of

the combination lock, only one opens the lock. Similarly, of all the

millions of unique and, with hindsight equally improbable, arrangements

of a heap of junk, only one (or very few) will fly. The uniqueness of

the arrangement that flies, or that opens the safe, has nothing to do

with hindsight. It is specified in advance. [Emphases and parenthetical note added,

in tribute to the late Sir Fred Hoyle. (NB: This case also shows that

we need not see boxes labelled "encoders/decoders" or

"transmitters/receivers" and "channels" etc. for the model in Fig. 1

above to be applicable; i.e. the model is abstract rather

than concrete: the critical issue is functional, complex information,

not electronics.)]

Here, we see how the significance of FSCI naturally

appears in the context of considering the physically and logically

possible but vastly improbable creation of a jumbo jet by

chance. Instantly, we see that mere random chance acting in a context

of blind natural forces is a most unlikely explanation, even though the

statistical behaviour of matter under random forces cannot rule it

strictly out. But it is so plainly vastly improbable, that, having seen

the message -- a flyable jumbo jet -- we then make

a fairly easy and highly confident inference to its most likely origin:

i.e. it is an intelligently designed artifact. For, the a posteriori

probability of its having originated by chance is obviously minimal --

which we can intuitively recognise, and can in principle

quantify.

FSCI

is also an observable, measurable quantity; contrary to what is

imagined, implied or asserted by many objectors. This may be most

easily seen by using a quantity we are familiar with: functionally specific bits [FS bits], such as those that define the information on the screen you are most likely using to read this note:

1 --> These bits are functional, i.e. presenting a sceenful of (more or less) readable and coherent text.

2 --> They are specific,

i.e. the screen conforms to a page of coherent text in English in a web browser

window; defining a relatively small target/island of function by comparison with the

number of arbitrarily possible bit configurations of the screen.

3 --> They are contingent,

i.e your screen can show diverse patterns, some of which are

functional, some of which -- e.g. a screen broken up into "snow" --

would not (usually) be.

4 --> They are quantitative:

a screen of such text at 800 * 600 pixels resolution, each of bit depth

24 [8 each for R, G, B] has in its image 480,000 pixels, with

11,520,000 hard-working, functionally specific bits.

5 --> This is of course well beyond a "glorified common-sense" 500 - 1,000 bit rule of thumb complexity threshold at which contextually and functionally specific information is sufficiently complex that the explanatory filter would confidently rule such a screenful of text "designed," given that -- since there are at most that many quantum states of the atoms in it -- no search on the gamut of our observed cosmos can exceed 10^150 steps:

|

| Fig A.3: The needle- in-the haystack search challenge: the credibly accessible search window for our observed cosmos (< 10^150 states) is a tiny fraction of the configuration space specified by 1,000 or more bits of information storage capacity. |

EXPLANATION: This empirically anchored rule of thumb limit credibly works because the set of locally accessible states

[at ~ 10^43 states/second] for our observed cosmos as a whole [~ 10^80 atoms] across its usually estimated

lifespan [~ 10^25 seconds] is ~ 10^150 states. These states are in large part dynamically (and thus

more or less "smoothly") connected to earlier, neighbouring ones; all

the way back to the big bang and its initial conditions and

constraints.

So, [a]

search in the space of possible states of an abstractly possible

universe is constrained relative to the credible starting conditions of

the observed cosmos; indeed, the observed cosmos will not be able to search out 10^150 states. For instance, MIT Mechanical Engineering professor Seth Lloy'd calculation is that "[t]he

amount of information that the Universe can register and the number

of elementary operations that it can have performed over its history

are calculated. The Universe can have performed 10^120 ops on

10^90 bits ( 10^120 bits including gravitational degrees of

freedom)." [Cf. discussions here and here.

It takes a significant number of such operations to carry out any

process (such as running the general dynamics of the universe), much less to do one step in a search process. So, by far and

away, most of the operations would not be available for a search

process.]

Now, too, it is known that [b]

observed functionality of systems and elements in systems is vulnerable to often quite slight

perturbation, i.e. we deal with islands of function in a sea of

non-function, and also that [c] the functions delimited by 500 - 1,000+ bits of information-storing capacity are complex. This leads to: [d] deep isolation of the islands of function, or at least of the archipelagos in which they may sit.

[This is why for instance, even ardent Darwinists typically fear

exposure to mutation inducing trauma, e.g. chemicals or radiation -- we

all know from observation that dysfunctions such as cancer are the

overwhelmingly likely result from significant random changes to genes or other

functional molecules in the cells of our bodies. Similarly, it fits

well with the fossil record's observed pattern of sudden emergence, structural-functional stasis and disappearance. Likewise, contrary to the urban legend

on monkeys and typewriters, Mr Gates does not write new versions of his

operating system by putting Bonobos to bang away at keyboards, thus

modifying the existing OS at random! (This family of PC operating

systems also shows that effective design is not necessarily "perfect" or

even "optimal" relative to any one purpose or aspect: trade-offs and constraints

are key challenges of real-world design, especially if it has to be

robust against the vagaries of a highly uncertain environment.)]

So,

if [e] we suggest a provisional upper limit for the universal probability

bound based on in aggregate as many functional states as there are

accessible quantum states for our observed comsos, i.e. 10^150, and

[f] isolate the islands and archipelagos at least to the degree that a search window of that many

states are at most 1 in 10^150 of the states in the configuration space set by

the 1,000+ bits [cf. Fig. A.4], then [g] it becomes maximally unlikely

to initially get to the islands of function, or to hop beyond very

local archipelagos, through random search processes culling by degree

of functional success. That sets up 10^300 states as a plausible upper

bound; where also 1,000 bits corresponds to 2^1,000 ~ 1.071 * 10^301

accessible states.

To

see what that means for bio-functionality, consider now a hypothetical

enzyme of 232 amino acid residues [20^232 ~ 2^1,000], where each AA is

of general form H2N-CHR-COOH; proline being the main exception, as its R-group bonds back to the N-atom, making it technically an imino acid.

The "hypothesine" protein would be functional in a specific reaction, and in a particular

cluster of processes in the cell; being useless (or worse than merely

useless) in the wrong cell-process contexts. (For, function is contextually specific.) Now, consider for a simple initial argument that --

bearing in mind that the different R-groups are considerably divergent

in shape, size, reactivity, H-atom locations, tendency to mix with aqueous media, etc. -- on average 150 of the R-groups could

take up any of 10 AA values each in any

combination; the remainder being fixed by, say, the requisites of

key-lock fittting and/or chemical functionality. This would give us a variability of 10^150

configurations that preserve the required specific functionality.

(AT A MORE COMPLEX LEVEL: If,

instead, we model the the individual AA's as varying at random among 4 - 5 "similar" R-group

AA's on average without causing dys-functional change, the full 232-length string

would vary across 10^150 states. As a cross-check, Cytochrome-C, a commonly studied protein of about 100 AA's that is used for taxonomic research, typically varies

across 1 - 5 AA's in each position, with a few AA positions showing more variability

than that. About a third of the AA positions are invariant across a

range from humans to rice to yeast. That is, the observed variability,

if scaled up to 232 AA's, would be well within the 10^150 limit

suggested; as, e.g. 5^155 ~ 2.19 * 10^108. [Cf also this summary of a study of the same protein among 388 fish species.]

Moreover, from Voegel's summary of Hurst, Haig and Freeland, the real-world DNA code evidently exhibits a near-optimal degree of built-in

redundancy

such that typical random single-point changes in three-letter codons

will replace the

R-group with one of a few chemically and/or structurally very

similar

ones; reducing the likelihood of functional deranging of folded

[secondary] and/or agglomerated [tertiary] structures. [Variablity at

random across all 20 AA's is not reasonable; as that would make proline

typically ~ 5% of the changes, and proline's structural rigidity due to

the R-group's bonding back to the N-atom would most likely destroy

desired folding and onward structures, thus deranging functionality. It is

noteworthy, for instance, that sickle-cell anaemia is typically caused by a single point change in a haemoglobin AA sequence.]

Given the

key-lock fitting requisites of working proteins in the cell, this sort

of "close replacement" is credibly responsible for most of the

variability across AA configurations as is studied for say the

Cytosine-C taxonomic trees for life-forms.)

Similarly, a 143-element ASCII text

string (about eighteen typical English words, with provision for spaces

and punctuation) has a contingency space of ~ 2^1,000. Starting from

such a string that is correctly spelled, has correct grammar and is

contextually relevant, it would be quite hard to get 10^150 random

variations of characters that would also be of correct spelling and

grammar, and just as much contextually relevant. It would be even

harder to get to the first such sentence by random chance.

In short, the rule of thumb is plausible and has a reasonable fit to a key context, the biological world.

Ultimately,

though, the warrant for such a rule of thumb is provisional (as are all

significant scientific findings and models) and based on

empirical tests. In effect: can

you identify a well established case of independently known origin

where a functionally specified entity with at least 1,000 bits of

storage capacity has been generated by chance + necessity without the

intelligent intervention of an agent (e.g through an "oracle" in a

genetic algorithm that broadcasts information on and so rewards

closeness to islands of function)? [A routine example of such a

test would be contextually relevant ASCII text in English

embracing 143 or more characters, as 128^143 ~ 2^1,000. That is, the

test is very widely carried out and the rule is strongly empirically supported.]

6 --> So we can construct a rule of thumb functionally specific bit metric for FSCI:

a] Let complex contingency [C]

be defined as 1/0 by comparison with a suitable exemplar, e.g. a tossed

die that on similar tosses may come up in any one of six states: 1/ 2/

3/ 4/ 5/ 6; joined to having at least 1,000 bits of information storage capacity. That is, diverse configurations of the component parts

or of outcomes under similar initial circumstances must be credibly

possible, and there must be at least 2^1,000 possible configurations.

b]

Let specificity [S] be identified as 1/0 through specific functionality [FS] or

by compressibility of description of the specific information [KS] or similar

means that identify specific target zones

in the wider configuration space. [Often we speak of this as "islands

of function" in "a sea of non-function." (That is, if moderate

application of random noise altering the bit patterns will beyond a

certain point destroy function [notoriously common for engineered

systems that require working parts mutually co-adapted at an operating

point, and also for software and even text in a recognisable language]

or move it out of the target zone, then the topology is similar to that

of islands in a sea.)]

c]

Let degree of complexity [B]

be defined by the quantity of bits

to store the relevant information, where from [a] we already see how

500 - 1,000 bits serves as the

threshold for "probably" to "morally certainly" sufficiently

complex to meet the FSCI/CSI threshold by which a random walk from an

arbitrary initial configuration backed up by trial and error is utterly

unlikely to ever encounter an island of function, on the gamut of our

observed cosmos. (Such a random walk plus trial and error is a

reasonable model for the various naturalistic mechanisms proposed for

chemical and body plan level biological evolution. It is worth noting

that "infinite monkeys" type tests have shown

that a search space of the order of 10^50 or so is searchable so

that functional texts can be identified and accepted on trial and

error. But searching 2^1,000 = 1.07 * 10^301 possibilities for islands

of function is a totally different matter.)

d] Define the vector {C, S, B}

based on the above [as we would take distance travelled and time

required, D and t: {D, t}], and take the element product C*S*B [as we

would take the element ratio D/t to get speed].

e] Now we identify the simple FSCI metric, X:

C*S*B = X,

. . . the required FSCI/CSI-metric in [functionally] specified bits.

f] Once we

are beyond 500 - 1,000 functionally specific bits, we are comfortably

beyond a threshold of sufficient complex and specific functionality

that the search resources of the observed universe would by far

and away most likely be fruitlessly exhausted on the sea of

non-functional states if a random walk based search (or generally

equivalent process) were used to try to get to shores of function on

islands of such complex, specific function.

[WHY: For, at 1,000 bits, the 10^150 states scanned by the observed universe acting as search engine would be comparable to: marking

one of the 10^80 atoms of the universe for just 10^-43 seconds out of

10^25 seconds of available time, then taking a spacecraft capable of

time travel and at random going anywhere and "any-when" in the observed

universe, reaching out, grabbing just one atom and voila that atom is

the marked atom at just the instant it is marked. In short, the

"search" resources are so vastly inadequate relative to the available

configuration space for just 1,000 bits of information storage capacity

that debates on "uniform probability distributions" etc are moot: the whole observed universe acting as a search engine could not put up a credible search of such a configuration space. And,

observed life credibly starts with DNA storage in the 100's of kilo

bits of information storage. (100 k bits of information storage

specifies a config space of order ~ 9.99 *10^30,102; which vastly

dwarfs the ~ 1.07 * 10^301 states specified by 1,000 bits.)]

7 --> For instance, for the 800 * 600 pixel PC screen, C = 1, S = 1, B = 11.52 * 10^6, so C*S*B = 11.52

* 10^6, FS bits. This is well beyond the threshold. [Notice that if the

bits were not contingent or were not specific, then X = 0

automatically. Similarly, if B < 500, the metric would indicate the

bits as functionally or compressibly etc specified, but

without enough bits to be comfortably beyond the UPB threshold. Of

course, the DNA strands of observed life forms start

at about 200,000 FS bits, and that for forms that depend on others for

crucial nutrients. 600,000 - 10^6 FS bits is a reported reasonable

estimate for a minimally complex independent life form.]

8 --> A more sophisticated (though sometimes controversial) metric has of course been given by Dembski, in a 2005 paper, as follows:

define

ϕS as . . . the number of patterns for which [agent] S’s

semiotic description of them is at least as simple as S’s

semiotic description of [a pattern or target zone] T. [26] . . . .

where M is the number of semiotic agents [S's] that within a context of

inquiry might also be witnessing events and N is the number of

opportunities for such events to happen . . . . [where also] computer

scientist Seth Lloyd has shown that 10^120 constitutes the maximal

number of bit operations

that the known, observable universe could have performed throughout

its entire multi-billion year history.[31] . . . [Then] for any

context of inquiry in which S might be endeavoring

to determine whether an event that conforms to a pattern T happened

by chance, M·N will be bounded above by 10^120. We thus define

the

specified complexity

[χ] of T given [chance hypothesis] H [in bits] . . . as [the negative base-2 log of the conditional probability P(T|H) multiplied by the number of similar cases ϕS(t) and also by the maximum number of binary search-events in our observed universe 10^120]

χ

= – log2[10^120

·ϕS(T)·P(T|H)].

9 --> When 1 >/= χ,

the probability of the observed event in the target zone or a similar

event is at least 1/2, so the available search resources of the observed cosmos across its estimated lifespan

are in principle adequate for an observed event [E] in the target zone to credibly occur by chance. But if χ

significantly exceeds 1 bit [i.e. it is past a threshold that as shown

below, ranges from about 400 bits to 500 bits -- i.e. configuration

spaces of order 10^120 to 10^150], that becomes increasingly

implausible. The

only credibly known and reliably observed cause for events of this last

class is intelligently directed contingency, i.e. design. Given the scope of the Abel plausibility bound for our solar system, where available probabilistic resources

qΩs = 10^43 Planck-time quantum [not chemical -- much, much slower] events per second x

10^17 s since the big bang x

10^57 atom-level particles in the solar system

Or, qΩs = 10^117 possible atomic-level events [--> and perhaps 10^87 "ionic reaction chemical time" events, of 10^-14 or so s],

. . . that is unsurprising.

10

--> Thus, we have a rule of thumb informational X-metric and a more

sophisticated informational Chi-metric for CSI/FSCI, both providing

reasonable grounds for confidently inferring to design. As will be shown below, both

rely on finding a reasonable measure for the information in an item on

a target or hot zone -- aka island of function where the zone is set

off observed function -- and then comparing this to a reasonable

threshold for sufficently complex that non-foresighted mechanisms (such

as blind watchmaker random walks from an initial start point and

leading to trial and error), will be maximally unlikely to reach such a

zone on the gamut of resources set by our observable cosmos. the Durston et al metric helps us see how that works.

11 --> Durston, Chiu, Abel and Trevors provide a third metric, the Functional H-metric in functional bits or fits, a functional bit extension of Shannon's H-metric of average information per symbol, here. The

way the Durston et al metric works by extending Shannon's H-metric of

the average info per symbol to study null, ground and functional states

of a protein's AA linear sequence -- illustrating and providing a

metric for the difference between order, randomness and functional

sequences discussed by Abel and Trevors -- can be seen from an excerpt

of the just linked paper. Pardon length and highlights, for clarity in

an instructional context:

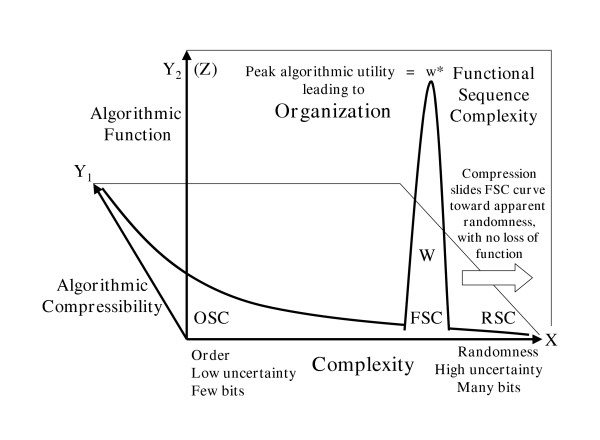

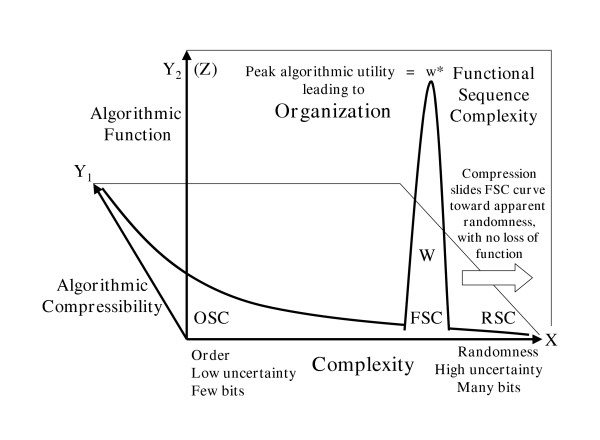

Abel and Trevors have delineated three qualitative aspects of linear digital sequence complexity [2,3],

Random Sequence Complexity (RSC), Ordered Sequence Complexity (OSC) and

Functional Sequence Complexity (FSC). RSC corresponds to stochastic

ensembles with minimal physicochemical bias and little or no tendency

toward functional free-energy binding. OSC is usually patterned either

by the natural regularities described by physical laws or by